
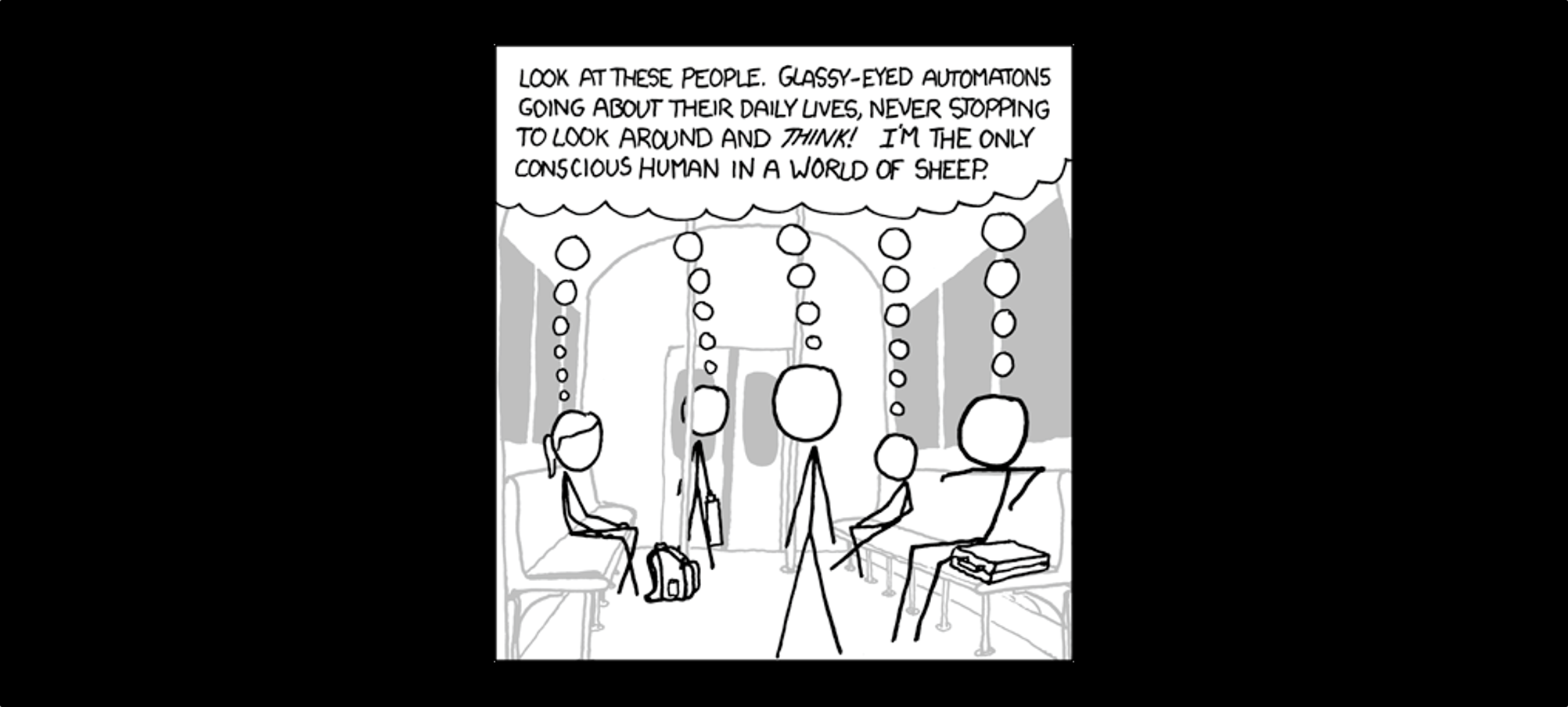

There is a lot of talk circulating around the internet these days about not being sheep. This sense of the word emphasizes that we ought not blindly follow the people in front of us and follow the crowd to our destruction. The corresponding Biblical injunction would probably be Jesus’ command to be “as wise as serpents.”

The problem is that the Bible says we are sheep by nature – we are predisposed to follow a crowd, especially a crowd we already trust. Ironically, a lot of the people on my Facebook feed shouting “don’t be sheep!” are backed up in their comment thread by a dozen adherents who clearly share their identical beliefs. They aren’t brilliant thought leaders; they are just in a different pack of sheep. A study of religious history will reveal no shortage of obscure heretics and cults which flame up and burn out, each fervently convinced that they have truth and all others have lost the true way.

The good news is that there are things we can do to follow the Biblical command to be wise – very practical things that will help us with both our politics and our theology.

1. Don’t be so sure

It’s amazing how quickly we lose sight of the danger of pride. Despite around 50 biblical references to the danger of this sin, we somehow think that humility has no place in theological or political discussions.

The fact is that there is not a single person on earth who holds the correct opinion on every issue, and I doubt anyone is particularly close (I am certainly not close). The Bible gives good examples of people who were open to learning.

Apollos was a great young evangelist, yet Acts records that “[When] he began to speak boldly in the synagogue: whom when Aquila and Priscilla had heard, they took him unto them, and expounded unto him the way of God more perfectly.” Apollos was a man on a mission, yet he needed to be instructed. Peter was a man in authority in the early church, and he had spent years learning from the Master himself. Yet, Paul records an occasion where “when Peter was come to Antioch, I withstood him to the face, because he was to be blamed.” Paul publicly rebuked Peter for racism against the Gentiles, and Peter took the correction.

In some way, we are all like Apollos or Peter, either needing to be taught that we don’t know everything yet or rebuked because something we thought we knew was dead wrong. In either event, we need to bring humility to the table from the outset and acknowledge that in discussing a broad topic, it’s not a matter of “if” we are wrong about some facet; it’s merely a matter of which facet we are wrong about.

How do we do this practically? I personally like to express the strength of my beliefs along a range of confidence. From my study of economics, I’m nearly certain that free trade results in more wealth for both countries involved, I’m fairly sure the excess wealth will be realized within one generation, and I suspect that economic sanctions on countries we don’t like are a waste of time and a policy we should discard. That is a broad issue on which I shared three beliefs, each with a different level of confidence. If we had a debate about the issue, you would much sooner talk me out of the third belief than the first, though I admit that I could be wrong on any point.

Too many people frame all their beliefs as certainties. They are sure that the man the cop shot was a thug, or that the cop was a racist, after watching a sixteen-second video clip. They are sure of what a verse in Daniel means, despite the very confusing context. Certainty should be correlated with evidence – the less evidence you have, the more loosely you should hold a belief.

Given that we hold some beliefs loosely and some tightly, how should we determine whether to believe an idea in the first place?

2. Falsify before you verify

I could expound at length on a wide variety of techniques to identify logical fallacies, analyze a scientific study, or understand statistics. While all of that would be valuable, there is one simple tool that will cut through 90% of the insane ideas that sane people fall for.

Imagine a theft is committed and you are tasked with evaluating one of the suspects. You may search for evidence to see if he committed the crime. He is the same height and build as the figure in the grainy security footage captured at the scene. His shoe is also the same size as the footprint found. His financial situation would give him motive. So, is he the thief? You’ve been looking for evidence that suggests he did commit the crime. You have been trying to verify that he is the thief.

But the fastest way to determine whether he is the thief is to look for reasons why he did not commit the crime. If you find hard evidence that he was in Brazil when the crime was committed in Wisconsin, then you have falsified the claim that he was the thief. This is why the first thing investigators do is look for an alibi – a reason why the suspect didn’t commit the crime – before they waste their time matching shoeprints and looking for motive.

To falsify means to attempt to disprove. You should attempt to disprove any belief that you want to be sure is right. Only if you can’t disprove it should you then look for evidence to prove or verify it, and after both processes you can be as confident as possible that it is true. If someone tells you that baptism is necessary for salvation, you don’t do a word search in your Bible for “baptism”; you go read all the verses about salvation which don’t mention baptism at all.

You can use this technique on any belief, whether it is widely held or obscure. However, it makes sense to be more skeptical of things that shock your pre-existing beliefs or of a conclusion which few people have reached. If you learn something which makes you say, “I can’t believe that!” you should probably attempt to falsify it before you share it on Facebook.

Can any belief stand up to a skeptical attempt to falsify it? Of course. The truth holds up quite nicely. When I try to falsify the existence of God by seeing what atheists have to say, I find their arguments unconvincing. When I give the atheists the same skeptical test, I find that intelligent design/creation accounts for a lot of things in nature that the atheists cannot.

Here are a few more specific ways to use falsification as a tool to evaluate an idea.

A. Choose a sample of straightforward claims to falsify

I was recently handed a document full of anti-vaccine claims to evaluate. Some of them are exceedingly difficult to falsify. It’s hard for me to disprove that big pharma companies are secretly conspiring with the government to infect us. However, many of the claims in the paper were of the sort that one could look up. For instance, they cited a scientific study, which they said showed that the flu vaccine was 100% ineffective. This struck me as rather unbelievable, so I looked up the study. Sure enough, it was from a reputable source, and it was a large study, but the anti-vaxxer had completely misrepresented the conclusion. The opening line of the conclusion was to the effect of “We determined that the flu vaccine was effective in preventing the flu.” Either the anti-vax paper was intentionally lying or, just as likely, the author had no idea how to read a scientific study and just read the headline. I found five or six such easily falsifiable claims in the three-page document, and with the weight of that evidence, I decided that their unprovable claims were most likely as weakly grounded as their allegedly provable ones.

B. Evaluate the bigger picture

Many times the purveyors of wacky theories are highly focused on minute details, such as the way the flag ripples in the breezeless atmosphere of the first moon landing photos. However, the easiest way to falsify something is often to step out of those details and start to ask questions about the bigger picture. There were six crewed moon landings from 1969-1972. If the first one was faked, were the subsequent five faked? If the subsequent five weren’t faked, and landing on the moon was possible in November of 1969 (the second landing), then why wasn’t it possible in July of 1969 (the first landing)? If all six were faked, why is there almost no analysis of evidence of chicanery in the other five? Why would the government take on the risk of faking the same thing six times? Surely faking it once or twice would be sufficiently risky. Also, there was a failed mission to land on the moon (Apollo 13), which seems like a rather absurd thing to fake if the purpose of faking the moon missions is to create bragging rights vis-à-vis the Soviets.

Regardless of whether there is a good answer to why the flag appears to be waving in the breeze (there is), in the bigger picture the belief that six and a half moon landings were faked falls apart.

Lots of beliefs can be falsified with this big picture treatment. Nearly two decades after 9/11, the conspiracy theorists can wax poetic about trace evidence of thermite incendiary devices (which could also be evidence of boring old primer paint), but they still can’t give a decent answer to the question “why would the US government attack itself?” The war on terror has been extremely costly, it didn’t require 9/11 as a justification anyway, and it’s difficult to see who benefited from it in the US government if terrorism was never a real threat.

C. Make note of predictions

Another great way to falsify a source is to keep track of its predictions. Keep in mind that a prediction can be right by chance. On any given day, there is someone predicting an economic collapse, a pandemic, an ice age, and an earthquake that breaks California off into the ocean. Eventually, some of these things are bound to happen, and whoever “predicted” them most recently will get the credit for being a sage. However, wacky theories are often tied in with numerous predictions about the future that age very poorly.

When I was in high school, a local talk radio show spent hours and hours convincing its listeners that there were terrorist training camps all across the US, planning a massive attack. Twelve years later, nothing has materialized. I remember someone who strongly believed (based on some emails forwarded to them) that the Euro was going to dramatically collapse in value any day. I didn’t dispute this claim; I just set a reminder on my phone to check the Euro value in 18 months. Now, nearly four years later, the Euro is still holding steady, and I can dismiss this claim and its source as bunk.

Predictions can be tricky, because a lot of times they are dismantled slowly over time as the evidence reveals the opposite, so that by the time the evidence is in, the prediction has been very deliberately hidden from view and memory. Because of this ability to walk back longer-term predictions over time, short-term predictions tend to be the sincerest. For instance, the stated reason for the US invasion of Iraq was to prevent Iraq’s proliferation of “Weapons of Mass Destruction” (WMDs). Upon arrival, it was soon concluded that Iraq did not have as many WMDs as was feared, and the Bush administration was lambasted for that failed prediction. (If we’re getting conspiratorial, why wouldn’t the Bush administration simply fake the WMDs, if faking is such an easy thing?) This can be taken as evidence that the Bush administration was sincerely wrong, but wrong nonetheless. The prediction you may have long ago forgotten about was the once common knowledge on the political left that the “secret reason” the US invaded Iraq was the oil. How is that prediction holding up now? Simple research about the current Iraqi oil industry reveals that out of 23 active oil contracts in Iraq, US companies hold only two of them, compared to five held by the Russians and Chinese. If the US went to war for oil, why didn’t we take any of it?

The same logic applies to biblical matters, especially end time theories. Just to cite one example, a website which calls itself “Prophecy Central (Bible-prophecy.com)” is talking about how coronavirus marks the end of the world. While they are not a Pentecostal Holiness site, they are linked to as a recommended resource by Holiness-Preaching.org and are doubtless used as a resource by many other denominations as well. Maybe they are right about COVID-19; all I can do is mark it on my calendar. But what is far more interesting is to look at the internet archive of their website, which took snapshots of the site over time, all the way back to 1998. When you look at the site over time, you see they did a lot of predicting and conjecturing that they don’t talk about anymore. Stories range from the imminent collapse of the southern wall of the temple mount and subsequent possible apocalypse (in 2002), to the imminent attack on America (in 2005), to the imminent unveiling of the long-lost ark of the covenant (in 2009).

Is the mark of the beast about to be rolled out in a coronavirus vaccine? I would encourage you to put a reminder on your calendar five years from now and see how that theory is working out. You can mark this down as a prediction from me – no, it isn’t. I could provide a dozen reasons for that, but in brief, in order for it to be the “mark of the beast,” everyone must be forced to receive it with no exceptions in order to participate in the economy. We still haven’t figured out how to get the polio vaccine to everyone in the world and it has been around since 1952. American vaccination rates for most diseases are in the 60-85% range, not 100%. Also, the COVID-19 vaccines will not be administered in the right hand or forehead and they will not be preceded by worshiping the image of a talking beast as per Revelation 13 … I could go on, but I’ll spare you.

If you want to falsify a new idea, just make a note of the predictions about the future and don’t let the predictor keep burying old predictions and selling you new ones.

3. Leverage bias to find truth

The best way to get your information is not from unbiased sources. You read that right. Don’t look for unbiased sources on current events. It’s like getting your information from leprechauns. They don’t exist, so I’m going to doubt anything you say you heard from leprechauns. The better way is to acknowledge that every source is biased, and to approach each source in full knowledge of its bias and leverage that bias to gain confidence.

This is not to say that all sources are equally valid. Clearly, Chinese state-owned media has a greater willingness to lie outright than the more competitive American media. There are numerous figures in the mainstream media who have lost credibility and their jobs for being caught lying (Dan Rather of CBS and Brian Williams of NBC). However, bias can also show itself in ways besides lying. Bias can also choose which stories are covered and which stories are buried, even if the ones brought to light are covered honestly.

So, if we don’t ignore biased sources, how do we use them? It’s as simple as shooting arrows with a crosswind; you adjust your point of aim to account for the wind. You observe the bias and adjust for it. The nice thing is that if a biased source admits something that goes against its bias, which they often do when the evidence is overwhelming, you can be very confident it is true. When I read in a secular publication that Christianity is growing rapidly in the developing world, I can be sure it is, because they would have no reason to exaggerate it and plenty of reason not to mention it at all.

Consider this practical application. If you search Christian sources for evidence of the Bible in archaeology, you will find long lists of “proofs of Scripture,” some of which are true and some of which are a bit … stretched. If you start with one of those lists and you compare some of those finds against a skeptical source, like National Geographic, you will find that many stand up to scrutiny, a few will fall off, and a few will be inconclusive. But this is good news! Because out of a list of 15 archaeological finds from the Bible, you will find 10 that even secularists also admit are valid. If they thought they weren’t valid, the secularists would certainly tell you. If a Mormon ran the same exercise for a list of “archaeological finds supporting the Book of Mormon,” he couldn’t stay a Mormon for long, because the critical sources would debunk all of the finds as nonsense. This example shows how leveraging biased and critical sources can lead you to truth.

The other nice thing about engaging with sources from a bias that conflicts with your own is that they will often raise an argument from logic. Arguments from logic can be faulty, but they cannot be “fake.” I can lie to you about a statistic or an anecdote. I can’t lie with a logical argument. If my argument is invalid, you should be able to point out in what way it is invalid, with no further research. Let’s say your friend is convinced that elections in America have all been rigged for decades. She finds a source that responds with this logical argument: “If elections are routinely rigged, why does power keep changing hands between the Democrats and the Republicans? If someone is rigging the elections, they would have a goal in mind and not vacillate between conflicting objectives. Therefore, no one is routinely rigging the elections.” This argument from logic relies on no facts outside of common knowledge. Your friend can’t simply dismiss it based on the source, because the source of a logical argument is irrelevant to the logic itself; she has to address it to dismiss it.

The key to research is not to find some holy grail of a completely unbiased source. If you think you’ve found it, you’ve been duped. The key is to see the bias and work with it.

Conclusion

The simple ways to not be easily influenced by falsehood are: to keep an open mind, to research new ideas with an eye to finding out why they are wrong, not why they are right (by looking for things you can fact check, exploring the bigger picture, and making a note of predictions), and to realize that you must use biased sources, but you can adjust for their biases to gain confidence in their conclusions.

There are many more research tools you can learn over time, but these will get you started. If you use these tools, you will, at least at the outset, be less certain about all your beliefs. That means that a conversation with you is less likely to devolve into Hitler analogies. It means you’re more likely to change your mind and reject some ideas that are popular in your circles (you might even have to go against the flow). Eventually, you will hold your core beliefs with even more certainty than before, because you have tested them.

“Sheeple” are more concerned about being certain than they are about being right – so they don’t try to falsify any of their beliefs, for fear that they might have to change them. Don’t be a sheep. Finding truth is more valuable than being right in your own mind.

-Nathan Mayo

Very well written article. I think I agree largely with your premise. There are some important points here that we all need to keep in mind in a time when we are continually bombarded with information from countless sources. I find it disturbing how so many repeat “information” found online with little effort to verify it. I did notice the reference to the “tacit endorsement” of prophecy central by the Holiness preaching site. I was curious about the relevance of this to the article. I am not familiar with the prophecy central site, but I saw that it was listed as a resource on Holinesspreaching.org. Did you happen to find out if this resource (prophecy central) is also recommended by any websites from other denominations or church groups? I guess I am just asking why the particular focus on the Holiness preaching site?

Hi Cody, Thanks for your feedback. The only reason I referenced the Holiness connection is because the majority of the audience for this website are people from a Holiness background and so I try to connect the articles to that broader topic. I am fairly sure that Prophecy Central is not from the Holiness Movement and I do not think that people from the Holiness movement are the worst offenders when it comes to bad predictions and over-confident eschatology. I think that is a human trait, not a Holiness one. However, I wanted to show that I wasn’t discrediting a site which no one had ever heard of, but rather one which is at least mildly influential in the Holiness Movement. In order to be more even-handed, I have changed the text you referenced to this: “While they are not a Pentecostal Holiness site, they are linked to as a recommended resource by Holiness-Preaching.org and are doubtless used as a resource by many other denominations as well.”

I really appreciate your honesty and fairness here. And I’m definitely on board with bad predictions and over-confident eschatology being a universal human trait!

God bless.